Promise-making and score-begging are things we see more often than we would like, particularly from companies that only pay lip service to Customer Experience. Let me tell you about a recent experience of mine, which is somewhat funny…

My car was due for service, so I googled the nearest Renault franchise. On their landing page, the “Service” section was prominent, and online booking was recommended. Lovely! I booked for the following Thursday at 9:00 and requested the “pick-up & drop-off at home” option.

Thursday came. It was 30 minutes past 9:00 and no one had shown up, so I decided to call. The person who picked up the phone asked me if I had received a confirmation call. I said I wasn’t even aware I should expect one, but I surely expected them to call if they could not accept my booking.

She laughed at me when I said I used online booking and requested pick-up/drop-off at home…

📞 “Yeah… you know, too many online requests and the person dealing with them is too busy. You should always call. As for pick-up/drop-off service, we can’t really do that.”

“Hum… I see. But your website recommends online booking, and has the option for pick-up/drop-off”, I said.

📞 “Yeah… I know nothing about internet. Do you want to book the service with me?”

Of course I did, and I asked what the next available slot was.

📞 “Next Tuesday 9:00… can you please give me your name, email, phone, address, car maker, model, registration, chassis number…”

I knew this was going to happen. It was so obvious!… “But I provided all that info in the online webform.”

📞 “Sir, as I said, I know nothing about internet. Do you want to book the service or not?”

I was a bit annoyed by the tone, but I needed the service, so I provided all the details again, and booked it.

Tuesday came and I was there at 10 minutes to 9:00 AM (I had a conference call at 9:30 AM, so I wanted to drop the car off quickly and go back home).

It took me 40 minutes (!!) to drop off the car, mostly because I had to provide all the information again to the person at the front desk: name, email, phone, address, car maker, model, registration, chassis number…

Whilst I was waiting for him to type everything into his computer, I looked around and saw the sign below 😮

The Renault network promises to…

1 – Reply to your online booking in less than 24 hours

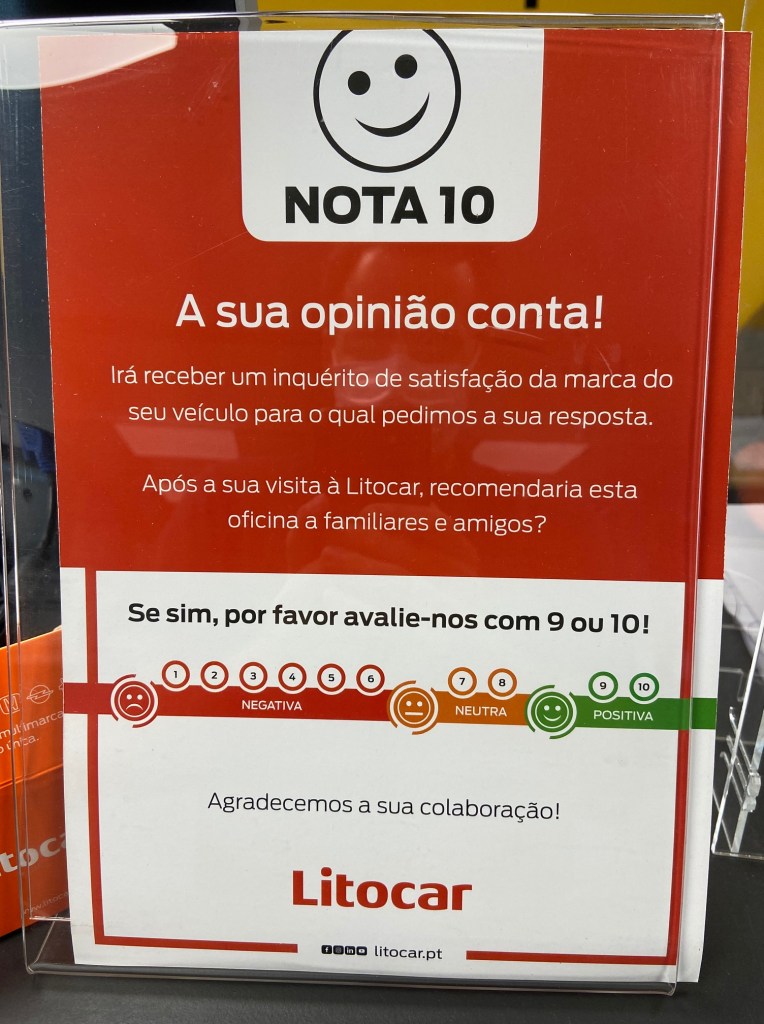

And at the other end of the counter was this one 🤔

Your opinion counts! You will receive a CSAT survey… please give us a 9 or 10

As I said initially, Renault is paying lip service to Customer Experience. They make promises they cannot keep (or make no effort to keep), and beg for scores they don’t deserve.

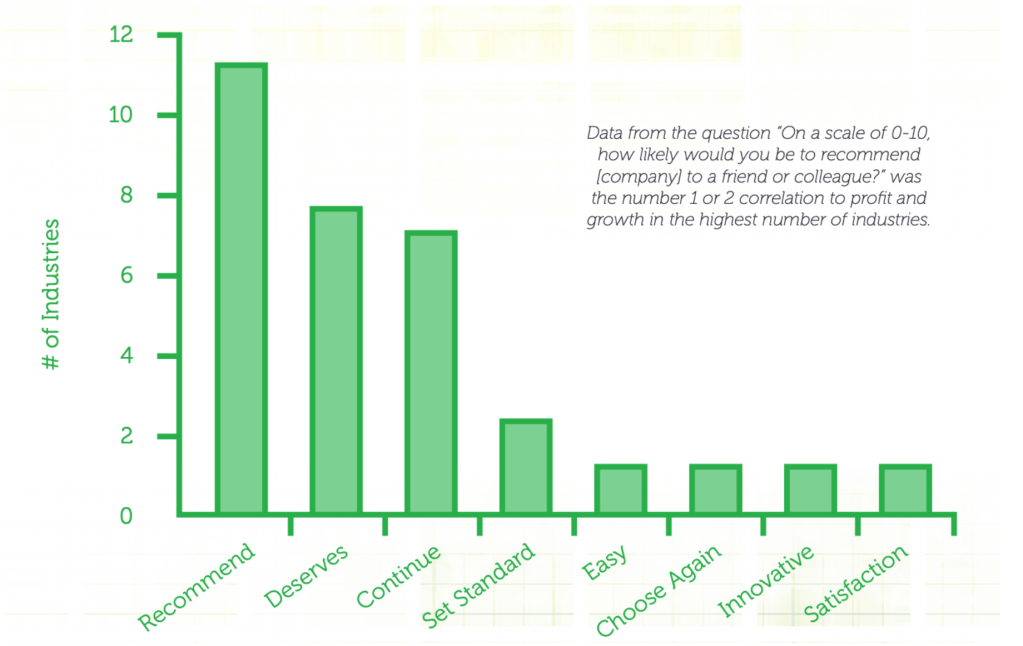

The truth is that whoever creates these initiatives seldom understands that they actually have the opposite effect. They think this way:

- By showing we are customer focused…

- And asking for good feedback…

- Customers will give us a high score…

- Others will see it, and come as well.

But in reality, this is what happens:

- Customers see promises you’re not interested in keeping…

- And go through high-effort & below-par experiences;

- Realise you only care about appearances…

- And resent your cheek in asking for a high score…

- Giving you a bad score, not coming back, and telling their friends.

In the meantime, Renault lost a great opportunity to understand where its gaps are and to either fix issues or improve experiences. For example:

- Does Renault know the person dealing with online bookings is overwhelmed?

- Does Renault know there isn’t enough staff to provide pick-up / drop-off?

- Does Renault know employees are duplicating customer information in different systems?

- Does Renault know customers are being hassled into providing their information over and over?

The funny thing is that, in my humble opinion, most of these are actually easy fixes that would have a massive impact on customer experience, and consequently on the scores that Renault is begging customers for.